Multiple comparisons problem

In statistics, the multiple comparisons, multiplicity or multiple testing problem occurs when one considers a set of statistical inferences simultaneously[1] or estimates a subset of parameters selected based on the observed values.[2]

The larger the number of inferences made, the more likely erroneous inferences become. Several statistical techniques have been developed to address this problem, for example, by requiring a stricter significance threshold for individual comparisons, so as to compensate for the number of inferences being made. Methods that control the family-wise error rate bound the probability of at least one false positive arising from the multiple comparisons problem.

History

The problem of multiple comparisons received increased attention in the 1950s with the work of statisticians such as Tukey and Scheffé. Over the ensuing decades, many procedures were developed to address the problem. In 1996, the first international conference on multiple comparison procedures took place in Tel Aviv.[3] This is an active research area with work being done by, for example, Emmanuel Candès and Vladimir Vovk.

Definition


30 samples of 10 dots of random color (blue or red) are observed. On each sample, a two-tailed binomial test of the null hypothesis that blue and red are equally probable is performed. The first row shows the possible p-values as a function of the number of blue and red dots in the sample.

Although the 30 samples were all simulated under the null, one of the resulting p-values is small enough to produce a false rejection at the typical level 0.05 in the absence of correction.
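The setup in this caption can be checked exactly rather than by simulation. The Python sketch below assumes each sample consists of 10 independent draws with P(blue) = 0.5 and uses the exact two-tailed binomial p-value for a symmetric null (twice the smaller tail probability, capped at 1). The function names are illustrative and do not come from any library.

```python
from math import comb


def two_sided_binom_pvalue(k: int, n: int) -> float:
    """Exact two-tailed p-value for k blue dots out of n under H0: P(blue) = 0.5.

    The null distribution is symmetric, so the p-value is twice the smaller
    tail probability, capped at 1.
    """
    total = 2 ** n
    lower = sum(comb(n, i) for i in range(0, k + 1)) / total  # P(X <= k)
    upper = sum(comb(n, i) for i in range(k, n + 1)) / total  # P(X >= k)
    return min(1.0, 2 * min(lower, upper))


def chance_of_false_rejection(n: int = 10, samples: int = 30, alpha: float = 0.05):
    """Return (P(one null sample is rejected), P(at least one of `samples` is rejected))."""
    # Every outcome k whose p-value is at most alpha leads to a (false) rejection.
    p_single = sum(comb(n, k) for k in range(n + 1)
                   if two_sided_binom_pvalue(k, n) <= alpha) / 2 ** n
    # Samples are independent, so "no rejection anywhere" has probability (1 - p_single)^samples.
    p_any = 1 - (1 - p_single) ** samples
    return p_single, p_any


if __name__ == "__main__":
    for k in range(11):
        print(k, round(two_sided_binom_pvalue(k, 10), 4))
    print(chance_of_false_rejection())
```

Under these assumptions only 0, 1, 9 or 10 blue dots give p ≤ 0.05 (p ≈ 0.002 or 0.021), so each null sample is falsely rejected with probability 22/1024 ≈ 0.021, and the chance that at least one of 30 independent samples is rejected comes out at roughly 0.48. One false rejection among 30 samples is therefore unremarkable.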

Multiple comparisons arise when a statistical analysis involves multiple simultaneous statistical tests, each of which has the potential to produce a "discovery". A stated confidence level generally applies only to each test considered individually, but often it is desirable to have a confidence level for the whole family of simultaneous tests.[4] Failure to compensate for multiple comparisons can have important real-world consequences, as illustrated by the following examples:

- Suppose the treatment is a new way of teaching writing to students, and the control is the standard way of teaching writing. Students in the two groups can be compared in terms of grammar, spelling, organization, content, and so on. As more attributes are compared, it becomes increasingly likely that the treatment and control groups will appear to differ on at least one attribute due to random sampling error alone.

- Suppose we consider the efficacy of a drug in terms of the reduction of any one of a number of disease symptoms. As more symptoms are considered, it becomes increasingly likely that the drug will appear to be an improvement over existing drugs in terms of at least one symptom.

In both examples, as the number of comparisons increases, it becomes more likely that the groups being compared will appear to differ in terms of at least one attribute. Our confidence that a result will generalize to independent data should generally be weaker if it is observed as part of an analysis that involves multiple comparisons, rather than an analysis that involves only a single comparison.

For example, if one test is performed at the 5% level and the corresponding null hypothesis is true, there is only a 5% risk of incorrectly rejecting the null hypothesis. However, if 100 tests are each conducted at the 5% level and all corresponding null hypotheses are true, the expected number of incorrect rejections (also known as false positives or Type I errors) is 5. If the tests are statistically independent from each other (i.e. are performed on independent samples), the probability of at least one incorrect rejection is approximately 99.4%.
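The figures of 5 expected false positives and a 99.4% chance of at least one can be checked by simulation. This minimal Python sketch assumes continuous test statistics, so that under a true null hypothesis each p-value is uniformly distributed on [0, 1]; the function name, seed and number of trials are illustrative choices.

```python
import random


def simulate_null_family(m: int = 100, alpha: float = 0.05,
                         trials: int = 10_000, seed: int = 0):
    """Monte Carlo estimate of (mean number of false positives,
    P(at least one false positive)) when all m nulls are true and the tests
    are independent. Assumes each null p-value is Uniform(0, 1)."""
    rng = random.Random(seed)
    total_false = 0
    trials_with_any = 0
    for _ in range(trials):
        # Each p-value below alpha is an incorrect rejection (Type I error).
        false_positives = sum(rng.random() < alpha for _ in range(m))
        total_false += false_positives
        trials_with_any += false_positives > 0
    return total_false / trials, trials_with_any / trials


if __name__ == "__main__":
    mean_fp, p_any = simulate_null_family()
    print(f"mean false positives ~ {mean_fp:.2f} (exact: {100 * 0.05})")
    print(f"P(at least one) ~ {p_any:.3f} (exact: {1 - 0.95 ** 100:.3f})")
```

The exact values are 100 × 0.05 = 5 and 1 − 0.95^100 ≈ 0.994; the Monte Carlo estimates should land close to both.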

The multiple comparisons problem also applies to confidence intervals. A single confidence interval with a 95% coverage probability level will contain the true value of the parameter in 95% of samples. However, if one considers 100 confidence intervals simultaneously, each with 95% coverage probability, the expected number of non-covering intervals is 5. If the intervals are statistically independent from each other, the probability that at least one interval does not contain the population parameter is 99.4%.
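The same arithmetic can be checked for intervals. The Python sketch below assumes a normal population with known standard deviation σ and builds each of the 100 intervals as the usual z-interval from its own independent sample; the sample size, seed and function name are arbitrary illustrative choices.

```python
import random
from statistics import NormalDist, fmean


def simulate_interval_family(m=100, level=0.95, n=5, mu=0.0, sigma=1.0,
                             trials=2_000, seed=1):
    """Monte Carlo estimate of (mean number of non-covering intervals,
    P(at least one of m intervals misses mu)).

    Each interval is xbar +/- z * sigma / sqrt(n), computed from its own
    independent sample of size n (normal data, sigma assumed known).
    """
    rng = random.Random(seed)
    z = NormalDist().inv_cdf(0.5 + level / 2)  # about 1.96 for level = 0.95
    half_width = z * sigma / n ** 0.5
    misses_total = 0
    trials_with_miss = 0
    for _ in range(trials):
        misses = 0
        for _ in range(m):
            xbar = fmean(rng.gauss(mu, sigma) for _ in range(n))
            if abs(xbar - mu) > half_width:  # this interval does not contain mu
                misses += 1
        misses_total += misses
        trials_with_miss += misses > 0
    return misses_total / trials, trials_with_miss / trials


if __name__ == "__main__":
    print(simulate_interval_family())
```

Because each interval misses with probability 0.05 independently of the others, the estimates should be close to 5 non-covering intervals on average and about 0.994 for at least one miss.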

Techniques have been developed to prevent the inflation of false positive rates and non-coverage rates that occur with multiple statistical tests.

Classification of multiple hypothesis tests

The following table defines the possible outcomes when testing multiple null hypotheses. Suppose we have a number m of null hypotheses, denoted by H1, H2, ..., Hm. Using a statistical test, we reject the null hypothesis if the test is declared significant. We do not reject the null hypothesis if the test is non-significant. Summing each type of outcome over all Hi yields the following random variables:

| | Null hypothesis is true (H0) | Alternative hypothesis is true (HA) | Total |
|---|---|---|---|
| Test is declared significant | V | S | R |
| Test is declared non-significant | U | T | $m - R$ |
| Total | $m_0$ | $m - m_0$ | m |

- m is the total number of hypotheses tested

- $m_0$ is the number of true null hypotheses, an unknown parameter

- $m - m_0$ is the number of true alternative hypotheses

- V is the number of false positives (Type I error) (also called "false discoveries")

- S is the number of true positives (also called "true discoveries")

- T is the number of false negatives (Type II error)

- U is the number of true negatives

- $R = V + S$ is the number of rejected null hypotheses (also called "discoveries", either true or false)

In m hypothesis tests of which $m_0$ are true null hypotheses, R is an observable random variable, and S, T, U, and V are unobservable random variables.
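The bookkeeping in the table can be written out directly. This Python sketch is only usable in simulations, where the truth of each null hypothesis is known; with real data only the decisions, and therefore R, are observed. The class and function names are hypothetical.

```python
from dataclasses import dataclass
from typing import Sequence


@dataclass(frozen=True)
class OutcomeTable:
    """Cell counts of the outcome table (names follow the text)."""
    V: int  # true nulls declared significant (false positives)
    S: int  # true alternatives declared significant (true positives)
    U: int  # true nulls declared non-significant (true negatives)
    T: int  # true alternatives declared non-significant (false negatives)

    @property
    def R(self) -> int:
        """Number of discoveries; the only observable count."""
        return self.V + self.S

    @property
    def m0(self) -> int:
        """Number of true null hypotheses (unknown in practice)."""
        return self.V + self.U

    @property
    def m(self) -> int:
        return self.V + self.S + self.U + self.T


def tally(null_is_true: Sequence[bool], significant: Sequence[bool]) -> OutcomeTable:
    """Count each outcome type given ground truth (simulation only) and decisions."""
    if len(null_is_true) != len(significant):
        raise ValueError("need one truth label per test decision")
    V = S = U = T = 0
    for h0, sig in zip(null_is_true, significant):
        if h0 and sig:
            V += 1
        elif h0:
            U += 1
        elif sig:
            S += 1
        else:
            T += 1
    return OutcomeTable(V=V, S=S, U=U, T=T)


if __name__ == "__main__":
    print(tally([True, True, True, False, False], [True, False, False, True, False]))
```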

Controlling procedures


Multiple testing correction



Multiple testing correction refers to making statistical tests more stringent in order to counteract the problem of multiple testing. The best known such adjustment is the Bonferroni correction, but other methods have been developed. Such methods are typically designed to control the family-wise error rate or the false discovery rate.

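If m independent comparisons are performed, the family-wise error rate (FWER) is given by

$$\bar{\alpha} = 1 - \left(1 - \alpha_{\text{per comparison}}\right)^m.$$

Hence, unless the tests are perfectly positively dependent (i.e., identical), $\bar{\alpha}$ increases as the number of comparisons increases. If we do not assume that the comparisons are independent, then we can still say:

$$\bar{\alpha} \le m \cdot \alpha_{\text{per comparison}},$$

which follows from Boole's inequality. Example: $0.2649 = 1 - (1 - 0.05)^6 \le 0.05 \times 6 = 0.3$.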
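The gap between the exact FWER for independent tests and the Boole bound can be tabulated with a few lines of Python. This is a sketch; the function names are our own.

```python
def fwer_independent(alpha_per: float, m: int) -> float:
    """Family-wise error rate for m independent tests, each at level alpha_per."""
    return 1 - (1 - alpha_per) ** m


def boole_bound(alpha_per: float, m: int) -> float:
    """Upper bound on the FWER under arbitrary dependence (Boole's inequality)."""
    return min(1.0, m * alpha_per)


if __name__ == "__main__":
    for m in (1, 6, 20, 100):
        print(m, round(fwer_independent(0.05, m), 4), round(boole_bound(0.05, m), 4))
```

For m = 6 this reproduces 0.2649 ≤ 0.3; once m ≥ 20 the Boole bound is capped at 1 and no longer informative.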

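There are different ways to ensure that the family-wise error rate is at most $\bar{\alpha}$. The most conservative method, which is free of dependence and distributional assumptions, is the Bonferroni correction $\alpha_{\text{per comparison}} = \bar{\alpha}/m$. A marginally less conservative correction can be obtained by solving the equation for the family-wise error rate of $m$ independent comparisons for $\alpha_{\text{per comparison}}$. This yields $\alpha_{\text{per comparison}} = 1 - (1 - \bar{\alpha})^{1/m}$, which is known as the Šidák correction. Another procedure is the Holm–Bonferroni method, which uniformly delivers more power than the simple Bonferroni correction by testing only the lowest p-value ($i = 1$) against the strictest criterion, and the higher p-values ($i > 1$) against progressively less strict criteria, $\alpha_{\text{per comparison}} = \bar{\alpha}/(m - i + 1)$, where the p-values are sorted in increasing order and testing stops at the first p-value that fails its criterion.[5]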
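The three corrections described above can be sketched as decision rules on a list of p-values. This is a minimal Python illustration, not a library API: `alpha` plays the role of the target FWER $\bar{\alpha}$, the Šidák threshold assumes independent tests, and Holm's method is implemented as the step-down rule that stops at the first non-rejection.

```python
def bonferroni(pvalues, alpha=0.05):
    """Reject H_i when p_i <= alpha / m (valid under any dependence)."""
    m = len(pvalues)
    return [p <= alpha / m for p in pvalues]


def sidak(pvalues, alpha=0.05):
    """Reject H_i when p_i <= 1 - (1 - alpha)^(1/m).

    Gives an FWER of exactly alpha when all m nulls are true and the tests
    are independent.
    """
    m = len(pvalues)
    threshold = 1 - (1 - alpha) ** (1 / m)
    return [p <= threshold for p in pvalues]


def holm(pvalues, alpha=0.05):
    """Holm–Bonferroni step-down procedure."""
    m = len(pvalues)
    order = sorted(range(m), key=lambda j: pvalues[j])  # indices by increasing p-value
    rejected = [False] * m
    for i, idx in enumerate(order, start=1):
        # The i-th smallest p-value is compared with alpha / (m - i + 1).
        if pvalues[idx] <= alpha / (m - i + 1):
            rejected[idx] = True
        else:
            break  # stop at the first failure; all larger p-values are retained
    return rejected


if __name__ == "__main__":
    p = [0.01, 0.02, 0.005, 0.3]
    print(bonferroni(p), sidak(p), holm(p))
```

With the made-up p-values 0.01, 0.02, 0.005 and 0.3, Bonferroni and Šidák reject two hypotheses, while Holm also rejects the one with p = 0.02, because its criterion at the third step is 0.05/2 = 0.025.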

For continuous problems, one can employ Bayesian logic to compute $\bar{\alpha}$ from the prior-to-posterior volume ratio. Continuous generalizations of the Bonferroni and Šidák corrections are presented by Bayer and Seljak.[6]

Large-scale multiple testing

Traditional methods for multiple comparisons adjustments focus on correcting for modest numbers of comparisons, often in an analysis of variance. A different set of techniques has been developed for "large-scale multiple testing", in which thousands or even greater numbers of tests are performed. For example, in genomics, technologies such as microarrays can measure expression levels of tens of thousands of genes, and genotypes for millions of genetic markers can also be measured. Particularly in the field of genetic association studies, there has been a serious problem with non-replication: a result is strongly statistically significant in one study but fails to be replicated in a follow-up study. Such non-replication can have many causes, but failure to fully account for the consequences of making multiple comparisons is widely considered to be one of them.[7]

It has been argued that advances in measurement and information technology have made it far easier to generate large datasets for exploratory analysis, often leading to the testing of large numbers of hypotheses with no prior basis for expecting many of the hypotheses to be true. In this situation, very high false positive rates are expected unless multiple comparisons adjustments are made.

For large-scale testing problems where the goal is to provide definitive results, the family-wise error rate remains the most accepted parameter for ascribing significance levels to statistical tests. Alternatively, if a study is viewed as exploratory, or if significant results can be easily re-tested in an independent study, control of the false discovery rate (FDR)[8][9][10] is often preferred. The FDR, loosely defined as the expected proportion of false positives among all significant tests, allows researchers to identify a set of "candidate positives" that can be more rigorously evaluated in a follow-up study.[11]
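The false discovery rate is commonly controlled with the Benjamini–Hochberg step-up procedure of reference [8]. The sketch below is a minimal Python version under the usual assumption of independent (or suitably positively dependent) test statistics; `q` is the target FDR and the function name is illustrative.

```python
def benjamini_hochberg(pvalues, q=0.05):
    """Benjamini–Hochberg step-up procedure at target FDR q.

    Returns a list of booleans, True where the hypothesis is rejected.
    """
    m = len(pvalues)
    order = sorted(range(m), key=lambda i: pvalues[i])  # indices by increasing p-value
    # Find the largest rank k whose p-value satisfies p_(k) <= k * q / m.
    k = 0
    for rank, idx in enumerate(order, start=1):
        if pvalues[idx] <= rank * q / m:
            k = rank
    rejected = [False] * m
    for idx in order[:k]:  # reject the k smallest p-values
        rejected[idx] = True
    return rejected


if __name__ == "__main__":
    print(benjamini_hochberg([0.001, 0.03, 0.036, 0.045]))
```

For the made-up p-values 0.001, 0.03, 0.036 and 0.045 at q = 0.05, the comparison thresholds are 0.0125, 0.025, 0.0375 and 0.05. The second p-value fails its own threshold, but because the largest qualifying rank is 4, all four hypotheses are rejected; a Bonferroni threshold of 0.05/4 = 0.0125 would reject only the first.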

The practice of trying many unadjusted comparisons in the hope of finding a significant one is a known problem; whether applied unintentionally or deliberately, it is sometimes called "p-hacking".[12][13]

Assessing whether any alternative hypotheses are true


A basic question faced at the outset of analyzing a large set of testing results is whether there is evidence that any of the alternative hypotheses are true. One simple meta-test that can be applied when it is assumed that the tests are independent of each other is to use the Poisson distribution as a model for the number of significant results at a given level α that would be found when all null hypotheses are true.[citation needed] If the observed number of positives is substantially greater than what should be expected, this suggests that there are likely to be some true positives among the significant results.

For example, if 1000 independent tests are performed, each at level α = 0.05, we expect 0.05 × 1000 = 50 significant tests to occur when all null hypotheses are true. Based on the Poisson distribution with mean 50, the probability of observing more than 62 significant tests is less than 0.05 (about 0.042, whereas the probability of more than 61 is about 0.056), so if more than 62 significant results are observed, it is very likely that some of them correspond to situations where the alternative hypothesis holds. A drawback of this approach is that it overstates the evidence that some of the alternative hypotheses are true when the test statistics are positively correlated, which commonly occurs in practice.[citation needed] On the other hand, the approach remains valid even in the presence of correlation among the test statistics, as long as the Poisson distribution can be shown to provide a good approximation for the number of significant results. This scenario arises, for instance, when mining significant frequent itemsets from transactional datasets. Furthermore, a careful two-stage analysis can bound the FDR at a pre-specified level.[14]
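The Poisson calculation can be sketched in Python as follows, assuming (as the text does) independent tests, so that the number of significant results under the global null is approximately Poisson with mean m·α. The helper names are hypothetical; the tail probability is obtained by summing terms from the recurrence P(X = k) = P(X = k − 1)·λ/k.

```python
from math import exp


def poisson_sf(c: int, lam: float) -> float:
    """Return P(X > c) for X ~ Poisson(lam)."""
    term = exp(-lam)  # P(X = 0)
    cdf = term
    for k in range(1, c + 1):
        term *= lam / k  # P(X = k) from P(X = k - 1)
        cdf += term
    return 1.0 - cdf


def significance_cutoff(m: int, alpha: float, level: float = 0.05) -> int:
    """Smallest count c with P(X > c) < level when all m nulls are true,
    using the Poisson(m * alpha) model for the number of significant tests."""
    lam = m * alpha
    c = 0
    while poisson_sf(c, lam) >= level:
        c += 1
    return c


if __name__ == "__main__":
    c = significance_cutoff(1000, 0.05)
    print(c, round(poisson_sf(c - 1, 50.0), 4), round(poisson_sf(c, 50.0), 4))
```

For 1000 tests at α = 0.05 this gives a cutoff of 62: P(X > 61) ≈ 0.056, while P(X > 62) ≈ 0.042, so more than 62 significant results would be needed before the excess is unlikely under the all-null model.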

Another common approach that can be used in situations where the test statistics can be standardized to Z-scores is to make a normal quantile plot of the test statistics. If the observed quantiles are markedly more dispersed than the normal quantiles, this suggests that some of the significant results may be true positives.[citation needed]
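The points of a normal quantile plot can be computed without any plotting library. The Python sketch below pairs sorted z-scores with standard-normal quantiles at plotting positions (i − 0.5)/n, a common convention assumed here, and adds a crude dispersion ratio of our own devising as a one-number summary; it is not a standard test, and all names are hypothetical.

```python
import random
from statistics import NormalDist, pstdev


def normal_qq_pairs(z_scores):
    """Return (theoretical, observed) points of a normal quantile plot.

    Observed z-scores are sorted and paired with standard-normal quantiles
    at the plotting positions (i - 0.5) / n, i = 1..n.
    """
    n = len(z_scores)
    std_normal = NormalDist()  # mean 0, standard deviation 1
    theoretical = [std_normal.inv_cdf((i - 0.5) / n) for i in range(1, n + 1)]
    return list(zip(theoretical, sorted(z_scores)))


def dispersion_ratio(z_scores):
    """Spread of the observed quantiles divided by spread of the normal quantiles.

    Close to 1 when all null hypotheses are true; noticeably above 1 when
    some test statistics come from shifted alternatives.
    """
    pairs = normal_qq_pairs(z_scores)
    theoretical = [t for t, _ in pairs]
    observed = [o for _, o in pairs]
    return pstdev(observed) / pstdev(theoretical)


if __name__ == "__main__":
    rng = random.Random(2)
    null_only = [rng.gauss(0, 1) for _ in range(2000)]
    # Hypothetical mixture: 10% of tests have a true effect that shifts z by about 4.
    mixed = [rng.gauss(0, 1) for _ in range(1800)] + [rng.gauss(4, 1) for _ in range(200)]
    print(round(dispersion_ratio(null_only), 2), round(dispersion_ratio(mixed), 2))
```

In the simulated all-null case the ratio stays near 1, while the hypothetical mixture spreads the observed quantiles well beyond the normal ones, which is the pattern the text describes.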

See also

- Key concepts
  - Family-wise error rate
  - False positive rate
  - False discovery rate (FDR)
  - False coverage rate (FCR)
  - Interval estimation
  - Post-hoc analysis
  - Experimentwise error rate
  - Statistical hypothesis testing
- General methods of alpha adjustment for multiple comparisons
  - Closed testing procedure
  - Bonferroni correction
  - Boole–Bonferroni bound
  - Duncan's new multiple range test
  - Holm–Bonferroni method
  - Harmonic mean p-value procedure
  - Benjamini–Hochberg procedure
  - E-values
- Related concepts
  - Testing hypotheses suggested by the data
  - Texas sharpshooter fallacy
  - Model selection
  - Look-elsewhere effect
  - Data dredging

References

- ^ Miller, R.G. (1981). Simultaneous Statistical Inference 2nd Ed. Springer Verlag New York. ISBN 978-0-387-90548-8.

- ^ Benjamini, Y. (2010). "Simultaneous and selective inference: Current successes and future challenges". Biometrical Journal. 52 (6): 708–721. doi:10.1002/bimj.200900299. PMID 21154895. S2CID 8806192.

- ^ "Home". mcp-conference.org.

- ^ Kutner, Michael; Nachtsheim, Christopher; Neter, John; Li, William (2005). Applied Linear Statistical Models. McGraw-Hill Irwin. pp. 744–745. ISBN 9780072386882.

- ^ Aickin, M; Gensler, H (May 1996). "Adjusting for multiple testing when reporting research results: the Bonferroni vs Holm methods". Am J Public Health. 86 (5): 726–728. doi:10.2105/ajph.86.5.726. PMC 1380484. PMID 8629727.

- ^ Bayer, Adrian E.; Seljak, Uroš (2020). "The look-elsewhere effect from a unified Bayesian and frequentist perspective". Journal of Cosmology and Astroparticle Physics. 2020 (10): 009. arXiv:2007.13821. Bibcode:2020JCAP...10..009B. doi:10.1088/1475-7516/2020/10/009. S2CID 220830693.

- ^ Qu, Hui-Qi; Tien, Matthew; Polychronakos, Constantin (2010-10-01). "Statistical significance in genetic association studies". Clinical and Investigative Medicine. 33 (5): E266–E270. ISSN 0147-958X. PMC 3270946. PMID 20926032.

- ^ Benjamini, Yoav; Hochberg, Yosef (1995). "Controlling the false discovery rate: a practical and powerful approach to multiple testing". Journal of the Royal Statistical Society, Series B. 57 (1): 125–133. JSTOR 2346101.

- ^ Storey, JD; Tibshirani, Robert (2003). "Statistical significance for genome-wide studies". PNAS. 100 (16): 9440–9445. Bibcode:2003PNAS..100.9440S. doi:10.1073/pnas.1530509100. JSTOR 3144228. PMC 170937. PMID 12883005.

- ^ Efron, Bradley; Tibshirani, Robert; Storey, John D.; Tusher, Virginia (2001). "Empirical Bayes analysis of a microarray experiment". Journal of the American Statistical Association. 96 (456): 1151–1160. doi:10.1198/016214501753382129. JSTOR 3085878. S2CID 9076863.

- ^ Noble, William S. (2009-12-01). "How does multiple testing correction work?". Nature Biotechnology. 27 (12): 1135–1137. doi:10.1038/nbt1209-1135. ISSN 1087-0156. PMC 2907892. PMID 20010596.

- ^ Young, S. S., Karr, A. (2011). "Deming, data and observational studies" (PDF). Significance. 8 (3): 116–120. doi:10.1111/j.1740-9713.2011.00506.x.

- ^ Smith, G. D., Shah, E. (2002). "Data dredging, bias, or confounding". BMJ. 325 (7378): 1437–1438. doi:10.1136/bmj.325.7378.1437. PMC 1124898. PMID 12493654.

- ^ Kirsch, A; Mitzenmacher, M; Pietracaprina, A; Pucci, G; Upfal, E; Vandin, F (June 2012). "An Efficient Rigorous Approach for Identifying Statistically Significant Frequent Itemsets". Journal of the ACM. 59 (3): 12:1–12:22. arXiv:1002.1104. doi:10.1145/2220357.2220359.

Further reading

- F. Bretz, T. Hothorn, P. Westfall (2010), Multiple Comparisons Using R, CRC Press

- S. Dudoit and M. J. van der Laan (2008), Multiple Testing Procedures with Application to Genomics, Springer

- Farcomeni, A. (2008). "A Review of Modern Multiple Hypothesis Testing, with particular attention to the false discovery proportion". Statistical Methods in Medical Research. 17 (4): 347–388. doi:10.1177/0962280206079046. hdl:11573/142139. PMID 17698936. S2CID 12777404.

- Phipson, B.; Smyth, G. K. (2010). "Permutation P-values Should Never Be Zero: Calculating Exact P-values when Permutations are Randomly Drawn". Statistical Applications in Genetics and Molecular Biology. 9: Article39. arXiv:1603.05766. doi:10.2202/1544-6115.1585. PMID 21044043. S2CID 10735784.

- P. H. Westfall and S. S. Young (1993), Resampling-based Multiple Testing: Examples and Methods for p-Value Adjustment, Wiley

- P. Westfall, R. Tobias, R. Wolfinger (2011) Multiple comparisons and multiple testing using SAS, 2nd edn, SAS Institute

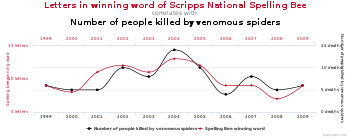

- A gallery of examples of implausible correlations sourced by data dredging

- An xkcd comic about the multiple comparisons problem, using jelly beans and acne as an example